As data privacy regulations tighten and organizations grow more cautious about sharing sensitive information, machine learning teams are rethinking how they train models. Traditionally, training requires centralizing data in one location—often a cloud server or data lake. However, this practice introduces significant privacy, security, and compliance risks. Federated learning offers a compelling alternative by allowing models to be trained across decentralized data sources without moving the raw data itself.

TLDR: Federated learning enables machine learning models to be trained without centralizing sensitive data. Tools like PySyft, TensorFlow Federated, and Flower allow organizations to keep data local while sharing only model updates. This approach reduces privacy exposure, can ease regulatory compliance, and limits the damage a single data breach can cause. As privacy-first AI becomes a priority, federated learning frameworks are becoming essential tools for modern ML teams.

At the center of this shift are tools like PySyft, an open-source framework designed to enable privacy-preserving machine learning. Alongside other federated learning platforms, PySyft allows developers to train models collaboratively across multiple devices or institutions—without compromising sensitive data.

What Is Federated Learning?

Federated learning is a distributed machine learning technique where multiple participants train a shared model collaboratively while keeping their data localized. Instead of sending raw data to a central server, each participant:

- Downloads a shared global model

- Trains it locally on their private dataset

- Sends only model updates (such as gradients or parameters) back to the central coordinator

The coordinator then aggregates these updates to improve the global model without ever seeing the underlying data.
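The round described above can be sketched in a few lines of plain Python. This is a minimal illustration of federated averaging on a toy linear model, not the API of any framework mentioned in this article. The helper names (`local_train`, `federated_average`, `make_client_data`) and the synthetic data are assumptions made for the example.

```python
import random

def local_train(weights, data, lr=0.1, epochs=5):
    """Fit y ~ w*x + b on one participant's private data with gradient descent."""
    w, b = weights
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / len(data)
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / len(data)
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]

def federated_average(client_weights, client_sizes):
    """Average client parameters, weighting each client by its dataset size."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]

def make_client_data(n, seed):
    """Hypothetical private dataset: noisy samples of y = 3x + 1."""
    rng = random.Random(seed)
    xs = [rng.uniform(-1, 1) for _ in range(n)]
    return [(x, 3.0 * x + 1.0 + rng.gauss(0, 0.05)) for x in xs]

# Three participants with differently sized datasets that never leave this list.
clients = [make_client_data(n, seed) for seed, n in enumerate([20, 50, 30])]

global_weights = [0.0, 0.0]
for round_num in range(50):
    # Steps 1 and 2: each participant starts from the global model and trains locally.
    updates = [local_train(global_weights, data) for data in clients]
    # Step 3: only the parameters are sent back and aggregated by the coordinator.
    global_weights = federated_average(updates, [len(d) for d in clients])

print(global_weights)  # should end up close to [3.0, 1.0]
```

Note that the coordinator only ever calls `federated_average` on parameter lists; the `(x, y)` pairs are touched only inside `local_train`.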

This structure significantly reduces the exposure of personal or regulated data while still enabling collaborative model improvement.

Why Centralized Data Training Is Problematic

While centralized training has dominated machine learning workflows, it comes with real challenges:

- Data privacy concerns: Health, financial, and personal records are highly sensitive.

- Regulatory restrictions: Laws like GDPR, HIPAA, and CCPA limit how personal data can be disclosed to or shared with third parties, and GDPR in particular restricts cross-border transfers.

- Security risks: Centralized databases are attractive targets for cyberattacks.

- Data ownership barriers: Organizations may refuse to share proprietary data assets.

Federated learning frameworks aim to solve these challenges by keeping data in its original location while still enabling collaborative insights.

PySyft: A Privacy-Preserving Machine Learning Framework

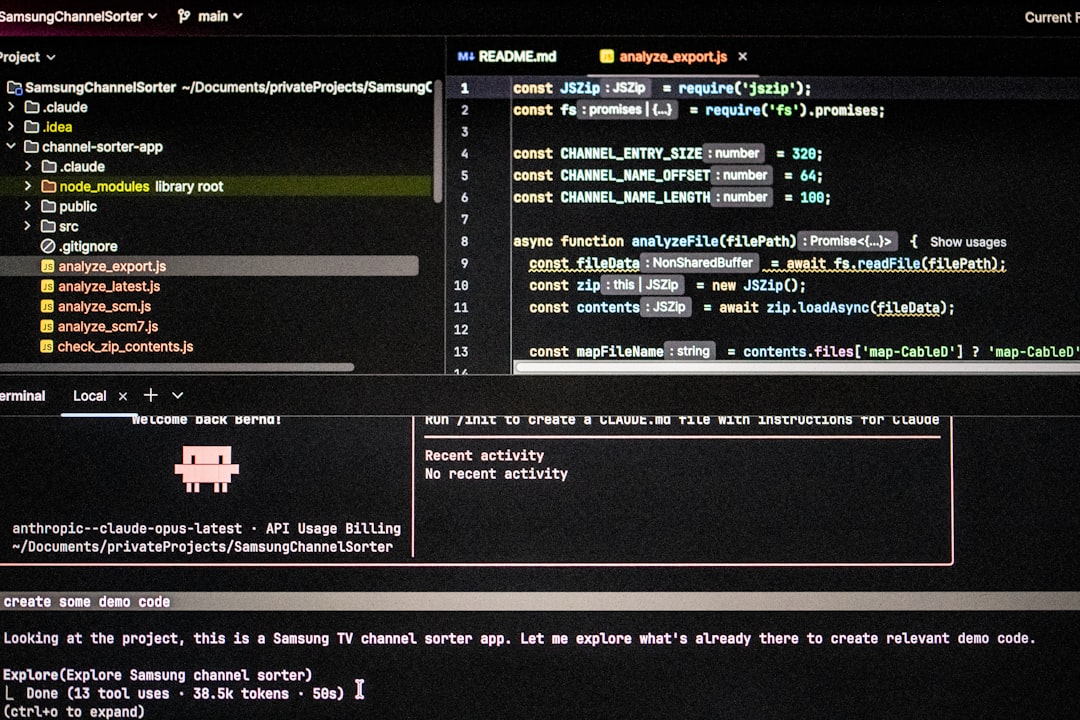

PySyft, developed by OpenMined, is one of the most recognized tools in privacy-preserving AI. Built on top of PyTorch, it extends deep learning workflows to support:

- Federated learning

- Secure multi-party computation

- Differential privacy

- Encrypted computation

PySyft introduces abstractions that allow data scientists to send models to data rather than bringing data to the model. This inversion of traditional workflows is key to maintaining privacy.

Key Features of PySyft

- Remote execution: Models can train on remote devices or servers.

- Privacy-enhancing techniques: Built-in support for differential privacy mechanisms.

- Encrypted computation: Secure model training even when collaborators do not trust each other.

- Integration with PyTorch: Familiar APIs for developers.

Because PySyft integrates directly with PyTorch, machine learning engineers can adapt existing workflows rather than redesigning them from scratch.

Other Popular Federated Learning Tools

While PySyft is powerful, several other frameworks serve different use cases. Choosing the right tool depends on scalability requirements, ecosystem preferences, and production readiness.

1. TensorFlow Federated (TFF)

Developed by Google, TensorFlow Federated is designed for large-scale federated learning experiments and research. It integrates tightly with TensorFlow and supports simulation environments for testing federated algorithms.

2. Flower (FLWR)

Flower is a flexible and framework-agnostic federated learning platform that supports PyTorch, TensorFlow, and other ML libraries. It is production-oriented and simplifies deployment across distributed environments.

3. NVIDIA FLARE

Focused on enterprise-grade and healthcare applications, NVIDIA FLARE provides robust orchestration and scaling support for multi-institution collaborations.

4. OpenFL

Initially developed by Intel, Open Federated Learning (OpenFL) is geared toward enterprise and research settings, especially in regulated industries.

Comparison Chart of Leading Federated Learning Tools

| Tool | Primary Ecosystem | Privacy Features | Production Readiness | Best For |
|---|---|---|---|---|
| PySyft | PyTorch | Differential privacy, secure computation | Research to mid-scale | Privacy-first AI research |
| TensorFlow Federated | TensorFlow | Federated aggregation | Research-focused | Large-scale simulation |
| Flower | Framework-agnostic | Customizable privacy layers | High | Cross-framework deployment |
| NVIDIA FLARE | Enterprise AI | Secure orchestration | Enterprise-grade | Healthcare and enterprise |
| OpenFL | Enterprise research | Secure aggregation | Enterprise-ready | Regulated industries |

How Federated Learning Preserves Privacy

Federated learning alone does not automatically guarantee privacy. However, it significantly reduces exposure risks. Privacy is enhanced through:

- Local data retention: Raw data never leaves the device or institution.

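- Secure aggregation: The server learns only the combined update from all participants, not any individual participant's contribution.

- Differential privacy: Calibrated noise can be added to updates to limit what inference attacks can reveal about any single record.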

- Encryption: Updates can be transmitted in encrypted form.

These mechanisms help mitigate common threats such as data breaches and model inversion attacks, in which an attacker tries to reconstruct training data from a model or its updates.
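To make the secure aggregation idea more concrete, here is a toy sketch of pairwise masking: every pair of participants shares a random mask that one adds and the other subtracts, so the masks cancel in the sum. It is a simplified illustration under several assumptions. The shared masks come from one seeded generator standing in for a real pairwise key agreement, dropouts are not handled, and the names (`pairwise_masks`, `mask_update`, the hospital IDs) are hypothetical rather than taken from any library.

```python
import random

MODULUS = 2**32  # all masked arithmetic is done modulo this value
SCALE = 10**4    # fixed-point scale used to turn floats into integers

def quantize(update):
    """Convert float values to non-negative integers modulo MODULUS."""
    return [round(v * SCALE) % MODULUS for v in update]

def dequantize(total):
    """Map modular integers back to signed floats."""
    out = []
    for v in total:
        if v >= MODULUS // 2:
            v -= MODULUS
        out.append(v / SCALE)
    return out

def pairwise_masks(client_ids, length, seed=0):
    """For each pair (i, j), draw one shared mask: i adds it, j subtracts it."""
    masks = {cid: [0] * length for cid in client_ids}
    rng = random.Random(seed)  # assumption: stand-in for pairwise key agreement
    for a in range(len(client_ids)):
        for b in range(a + 1, len(client_ids)):
            shared = [rng.randrange(MODULUS) for _ in range(length)]
            i, j = client_ids[a], client_ids[b]
            masks[i] = [(m + s) % MODULUS for m, s in zip(masks[i], shared)]
            masks[j] = [(m - s) % MODULUS for m, s in zip(masks[j], shared)]
    return masks

def mask_update(update, mask):
    """What a participant actually sends: its quantized update plus its mask."""
    return [(q + m) % MODULUS for q, m in zip(quantize(update), mask)]

def aggregate(masked_updates):
    """Server side: sum the masked vectors; the pairwise masks cancel out."""
    length = len(masked_updates[0])
    total = [sum(u[k] for u in masked_updates) % MODULUS for k in range(length)]
    return dequantize(total)

updates = {
    "hospital_a": [0.5, -1.25],
    "hospital_b": [1.0, 0.75],
    "hospital_c": [-0.25, 2.0],
}
masks = pairwise_masks(list(updates), length=2)
masked = [mask_update(updates[c], masks[c]) for c in updates]
summed = aggregate(masked)
print(summed)  # element-wise sum of the three original updates
```

Each masked vector looks like random integers on its own, yet the server still recovers the exact sum. Production protocols add key exchange, dropout recovery, and protection against malicious participants, none of which is shown here.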

Use Cases Across Industries

Healthcare

Hospitals can collaboratively train diagnostic models without sharing patient records. This is particularly valuable in rare disease research where datasets are small and geographically distributed.

Finance

Banks can improve fraud detection systems across institutions while complying with financial regulations that restrict data sharing.

Mobile Devices

Smartphones can update predictive text, recommendation systems, and voice recognition models without uploading personal user data.

Manufacturing

Factories in different regions can train predictive maintenance models using localized machine data without exposing proprietary operational insights.

Challenges and Limitations

Despite its promise, federated learning is not without complications:

- Communication overhead: Frequent parameter updates can strain networks.

- Heterogeneous data: Data distributions may vary significantly between participants.

- Device reliability: Edge devices may disconnect during training.

- Complex orchestration: Coordinating distributed training workflows can be difficult.

Frameworks like PySyft address some of these issues, but deploying federated learning at scale requires careful engineering.
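As one example of that engineering, communication overhead is often reduced by sending only the most significant entries of each update. The sketch below shows top-k sparsification in plain Python. The function names are hypothetical, and practical schemes usually also keep the dropped values locally and add them to the next round, which is omitted here.

```python
def top_k_sparsify(update, k):
    """Keep the k largest-magnitude entries, returned as {index: value}."""
    ranked = sorted(range(len(update)), key=lambda i: abs(update[i]), reverse=True)
    return {i: update[i] for i in ranked[:k]}

def densify(sparse, length):
    """Coordinator side: rebuild a full-length vector, treating missing entries as 0."""
    dense = [0.0] * length
    for i, v in sparse.items():
        dense[i] = v
    return dense

update = [0.02, -1.5, 0.0, 0.7, -0.01, 0.3]
sparse = top_k_sparsify(update, k=2)      # only 2 of 6 values are transmitted
restored = densify(sparse, len(update))

print(sparse)
print(restored)
```

The trade-off is accuracy per round versus bandwidth: a smaller `k` sends less data but discards more of each participant's update.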

The Future of Privacy-Preserving AI

Federated learning tools are rapidly evolving. As privacy regulations become more stringent and public awareness increases, decentralized AI training is likely to transition from optional innovation to standard practice.

Emerging developments include:

- Federated analytics for privacy-safe insights without full model training

- Improved secure aggregation protocols

- Integration with blockchain for auditability

- Standardized compliance frameworks
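Federated analytics, the first item above, follows the same pattern as federated learning but shares summary statistics instead of model updates. A minimal sketch, assuming each site reports only a count, a sum, and a sum of squares (the function names and sample values are made up for illustration):

```python
import math

def local_summary(values):
    """Computed at each site; only these three numbers leave the site."""
    return {"n": len(values), "sum": sum(values), "sum_sq": sum(v * v for v in values)}

def combine(summaries):
    """Coordinator side: derive the global mean and standard deviation."""
    n = sum(s["n"] for s in summaries)
    total = sum(s["sum"] for s in summaries)
    total_sq = sum(s["sum_sq"] for s in summaries)
    mean = total / n
    variance = total_sq / n - mean ** 2  # population variance
    return {"n": n, "mean": mean, "std": math.sqrt(max(variance, 0.0))}

site_a = [4.0, 6.0]
site_b = [5.0, 5.0, 10.0]
stats = combine([local_summary(site_a), local_summary(site_b)])
print(stats)
```

Summaries from very small groups can still reveal individual values, so in practice such statistics are typically combined with minimum group sizes or added noise.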

Tools like PySyft are paving the way for a model training paradigm where collaboration does not require compromising control of sensitive information.

FAQ

1. What is federated learning in simple terms?

Federated learning is a method of training machine learning models across multiple devices or institutions without moving their data to a central location. Only model updates are shared.

2. How does PySyft differ from other federated learning tools?

PySyft emphasizes privacy-enhancing technologies like differential privacy and secure multi-party computation. It is especially strong for research and experimentation in privacy-first AI systems.

3. Is federated learning fully secure?

No, not on its own. While federated learning reduces data exposure, it must be combined with secure aggregation and differential privacy techniques to guard against potential attacks.
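The differential privacy part is commonly implemented by clipping each update to a maximum norm and then adding Gaussian noise scaled to that norm. The sketch below shows only that mechanism. The parameter names (`max_norm`, `noise_multiplier`) and values are illustrative assumptions, and the privacy accounting that turns them into a formal guarantee is not shown.

```python
import math
import random

def clip_update(update, max_norm):
    """Scale the update down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(v * v for v in update))
    if norm <= max_norm:
        return list(update)
    scale = max_norm / norm
    return [v * scale for v in update]

def add_gaussian_noise(update, max_norm, noise_multiplier, rng):
    """Add zero-mean Gaussian noise with std = noise_multiplier * max_norm."""
    sigma = noise_multiplier * max_norm
    return [v + rng.gauss(0.0, sigma) for v in update]

rng = random.Random(42)
raw_update = [3.0, 4.0]                          # L2 norm is 5
clipped = clip_update(raw_update, max_norm=1.0)  # rescaled to norm 1
private = add_gaussian_noise(clipped, max_norm=1.0, noise_multiplier=0.5, rng=rng)

print(clipped)
print(private)
```

Clipping bounds how much any single participant can influence the aggregate, and the noise masks what remains. More noise gives stronger privacy at the cost of model accuracy.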

4. Can federated learning be used in production?

Yes. Tools like Flower, NVIDIA FLARE, and OpenFL are designed for production environments. PySyft is often used in research but can support practical deployments as well.

5. What industries benefit most from federated learning?

Healthcare, finance, telecommunications, mobile applications, and government sectors benefit significantly due to strict data privacy requirements.

6. Does federated learning reduce regulatory risks?

It can. Because raw data stays within its original jurisdiction or organization, federated learning can help organizations comply with regulations like GDPR or HIPAA, although it does not guarantee compliance by itself.

7. Is federated learning only for large companies?

No. Open-source tools like PySyft and Flower make federated learning accessible to research institutions, startups, and smaller ML teams.

As data privacy continues to shape the future of artificial intelligence, federated learning tools such as PySyft are redefining how collaboration happens in machine learning—making it possible to build powerful models without ever centralizing the data they rely on.